Atmos Basics

What is Dolby Atmos

Bed Audio

Object audio and Object metadata

Atmos objects considerations

Passthrough audio

Endpoints and buses

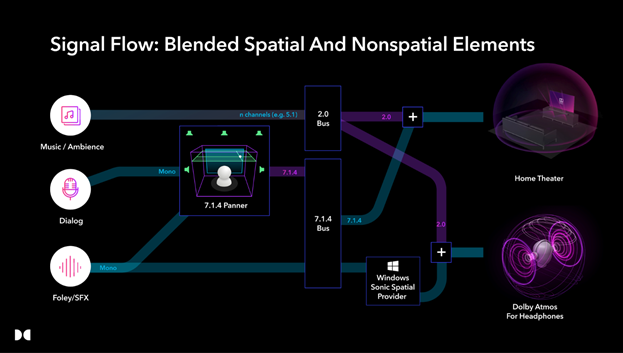

Consumer playback systems

Differences between games and linear media

Game objects vs Atmos objects

Objects and positional accuracy

Atmos fold downs

Supported platforms

Cost and Attribution

What is Dolby Atmos?

Dolby Atmos is an immersive audio format that surrounds the listener with sound. In addition to the traditional 5.1 or 7.1 surround setups, Atmos introduces height channels so that sound can come from above, increasing aural envelopment. These are represented by the 4 at the end of 7.1.4: the nomenclature denotes seven ear-level channels around the listener, one LFE (low frequency effects) channel, and four overhead channels. Atmos also adds Dolby Atmos objects to the mix, which are individual sound channels added to the 7.1.4 bitstream with dynamic x, y, z coordinates plus amplitude and spread metadata. Initially introduced in the cinema and then in home theater, Dolby Atmos is available in games for the full Xbox family from Xbox One through Series X|S, PlayStation 5, PC and mobile devices.
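As a small illustration of the nomenclature, the sketch below splits a layout label such as 7.1.4 into its three counts. The names `SpeakerLayout` and `parse_layout` are hypothetical helpers written for this example and are not part of any Dolby tool.

```python
from typing import NamedTuple


class SpeakerLayout(NamedTuple):
    ear_level: int  # speakers around the listener at ear height
    lfe: int        # low frequency effects channels
    height: int     # overhead channels (0 when the label has no third field)

    @property
    def total_channels(self) -> int:
        return self.ear_level + self.lfe + self.height


def parse_layout(label: str) -> SpeakerLayout:
    """Parse a label such as '5.1' or '7.1.4' into channel counts."""
    parts = [int(p) for p in label.split(".")]
    if len(parts) == 2:
        parts.append(0)  # legacy labels such as 5.1 carry no height field
    if len(parts) != 3:
        raise ValueError(f"unexpected layout label: {label!r}")
    return SpeakerLayout(*parts)


layout = parse_layout("7.1.4")
print(layout.total_channels)  # 12
```

A 7.1.4 bed therefore occupies 12 channels, which is the figure used elsewhere in this guide for monitor controller inputs and meter counts.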

Bed audio

With traditional multichannel audio, channels correspond to specific speaker locations such as the 5.1 or 7.1 Dolby Surround layout. If, for example, the mixer wants the sound to come from the Left Surround speaker, they bus or pan the audio to the left surround channel. If a mixer wants the audio to appear as though it is coming from between the Left Surround and Left speakers, they pan between the two to create a phantom image. Traditional multichannel audio is utilized in Dolby Atmos; these channels are referred to as “Bed” audio. Bed configurations can range in width from Stereo to 7.1.4.
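To make the phantom image idea concrete, here is a minimal sketch of panning between two adjacent bed channels. It assumes a constant-power (sine/cosine) pan law, which is a common textbook choice; the pan law used by any particular mixer, middleware or renderer may differ, and `constant_power_pan` is a hypothetical name.

```python
import math
from typing import Tuple


def constant_power_pan(position: float) -> Tuple[float, float]:
    """Return (gain_a, gain_b) for a source between speakers A and B.

    position = 0.0 places the sound fully in A, 1.0 fully in B.
    The squared gains always sum to 1, so perceived loudness stays
    roughly constant as the phantom image moves (assumed pan law).
    """
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must be within [0, 1]")
    angle = position * math.pi / 2
    return math.cos(angle), math.sin(angle)


# Halfway between Left Surround and Left: both channels get equal gain.
gain_ls, gain_l = constant_power_pan(0.5)
print(round(gain_ls, 4), round(gain_l, 4))
```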

Object audio and Object metadata

In addition to Bed audio, Dolby Atmos introduces the concept of Audio Objects, which are routed out separately from the bed channels. Object audio includes panning information in its metadata, represented as X, Y, and Z coordinates (the Z-axis being elevation or height). These coordinates are dynamic and are updated with every frame of the game.

Audio objects and their associated metadata are kept on separate buses from the Bed audio. On the end user's device they are rendered in the correct position for that device's speaker configuration and capability. This makes it possible to address individual speakers in a configuration, increasing panning resolution beyond what is possible in a discrete channel-based system (for example, 5.1 or 7.1.4). When using Dolby Atmos for Headphones, each Audio Object has its own HRTF convolution applied to it, resulting in more accurate positioning of the sound.
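One way to picture an object's metadata is as a small record that travels alongside the audio and is refreshed every game frame. The sketch below is illustrative only: the field names, the default values and the `next_frame` helper are assumptions made for this example and do not reflect the actual Dolby Atmos metadata format.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class ObjectMetadata:
    x: float             # left/right position
    y: float             # front/back position
    z: float             # elevation (height)
    gain: float = 1.0    # amplitude (assumed linear scale)
    spread: float = 0.0  # apparent size of the source (assumed 0 = point)


def next_frame(meta: ObjectMetadata,
               velocity: Tuple[float, float, float],
               frame_seconds: float) -> ObjectMetadata:
    """Return the metadata for the next game frame of a moving source."""
    vx, vy, vz = velocity
    return replace(
        meta,
        x=meta.x + vx * frame_seconds,
        y=meta.y + vy * frame_seconds,
        z=meta.z + vz * frame_seconds,
    )


meta = ObjectMetadata(x=0.0, y=0.0, z=0.0)
meta = next_frame(meta, velocity=(1.0, 2.0, 4.0), frame_seconds=0.5)
print(meta)
```

The audio samples themselves are unchanged by the update; only the position record changes, and the renderer on the playback device decides how to reproduce that position.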

Atmos objects considerations

If more audio objects are requested than are available on the system, each additional object is rendered in the bed channels instead of as its own object. It will still pan around the 3D space, but it will not get its own unique HRTF processing on headphones. On the PC and Xbox One, 20 Atmos objects are available for playback using HDMI, and 16 for Dolby Atmos for Headphones. In Wwise, System Audio Objects are allocated using first-in, first-out prioritization.
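The fallback behavior can be sketched as a fixed-size pool. This is a simplified model, not Wwise or platform code: `ObjectPool` is a hypothetical class, and it interprets first-in, first-out prioritization as "earlier requests get the object slots, later requests go to the bed until a slot is released", which is an assumption about the behavior described above.

```python
class ObjectPool:
    """Toy model of a limited set of system audio objects."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.active = set()  # ids of sounds currently holding an object

    def request(self, sound_id: str) -> str:
        """Return 'object' if a slot is free, otherwise 'bed'."""
        if len(self.active) < self.capacity:
            self.active.add(sound_id)
            return "object"
        return "bed"  # still panned in 3D, but mixed into the bed channels

    def release(self, sound_id: str) -> None:
        """Free the slot held by a sound that has finished playing."""
        self.active.discard(sound_id)


pool = ObjectPool(capacity=16)  # Dolby Atmos for Headphones limit from the text
routes = [pool.request(f"sound_{i}") for i in range(18)]
print(routes.count("object"), routes.count("bed"))  # 16 2
```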

Additional information:

Spatial Sound for Developers - Win32 apps | Microsoft Docs

Passthrough audio

Route audio to a passthrough bus when you don't want any additional positional HRTF filtering applied to the signal when audio is binauralized for stereo headphones. Music or UI sounds that you don't want spatialized would be good candidates to route to this bus.

Endpoints and buses

The number of audio channels that are output for a game depends on the endpoint of the gamer's system. An endpoint is the layer of a platform's OS that manages the final processing and mixing of the game sound, rendering it to the appropriate format before it is output to a hardware device. That device could be HDMI for a stereo TV, an Atmos-enabled Audio/Video Receiver (AVR) or soundbar, or a USB or 1/8" headphone jack.

Consumer playback systems

Consumers can experience Dolby Atmos in home theater setups with an Audio/Video Receiver (AVR) that supports Atmos, ranging from 3.1.0 up to 11.2.6 configurations. Atmos soundbars have also become very popular and may include separate subwoofers and rear speakers. Some systems (including TVs, soundbars and home theater speakers) incorporate separate speakers that bounce sound off the ceiling, which is another way to provide the height content.

Many gamers play using headphones, and Dolby Atmos for headphones provides an excellent solution for them. The headphone solution takes in the 7.1.4 channels of audio and individual Atmos objects, then virtualizes and binauralizes them using HRTF processing so the audio is perceived as coming from all around the player. In many cases this is an excellent solution to experience games in Dolby Atmos.

Dolby Atmos for Headphones

Dolby Atmos for Headphones enables sound to come from all around the player, including height, through any set of stereo headphones. It uses HRTF (head-related transfer function) processing to give the listener the perception of hearing sound sources around their head. It does this by modelling the time difference between a sound reaching each ear, and the acoustic shadowing caused by our pinnae (outer ears), head and torso.
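The interaural time difference mentioned above can be approximated with the classic Woodworth spherical-head formula, ITD = (r / c)(θ + sin θ). The sketch below uses that textbook simplification purely for intuition; it is not how Dolby Atmos for Headphones computes its HRTFs, and the head radius and speed of sound are assumed typical values.

```python
import math

HEAD_RADIUS_M = 0.0875       # assumed average head radius
SPEED_OF_SOUND_M_S = 343.0   # assumed speed of sound in air at room temperature


def woodworth_itd(azimuth_deg: float) -> float:
    """Approximate interaural time difference in seconds.

    azimuth_deg is the source angle from straight ahead (0) to
    directly beside one ear (90), using the Woodworth spherical-head model.
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("this sketch covers azimuths from 0 to 90 degrees")
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


for angle in (0.0, 30.0, 60.0, 90.0):
    print(angle, round(woodworth_itd(angle) * 1e6), "microseconds")
```

With these assumed values, a source directly to one side arrives roughly 0.66 ms earlier at the near ear, which is one of the cues HRTF processing reproduces over headphones.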

When the bed channels are virtualized for headphones using Dolby Atmos for Headphones, you could think of them as virtual speakers arrayed around the listener's head, using binaural processing (HRTF filtering) to place each virtual speaker in space around the listener. Sounds are panned around the virtual speakers in the same way they would be panned around speakers in the real world, creating phantom images between the virtual speakers.

Individual Dolby Atmos objects are each processed with their own HRTF, increasing the spatial accuracy compared to playing in the bed channels, which rely on phantom imaging between the virtual speakers to locate sounds in 3D space.

Additional resources:

HRTF: https://en.wikipedia.org/wiki/Head-related_transfer_function

Differences between games and linear media

There are some significant differences in the workflows to support Atmos for games versus linear content for home theater, even though they will use the same playback system.

Linear content is created for Dolby Atmos using a DAW sending audio and metadata to a Dolby Atmos renderer. Some DAWs such as Steinberg Cubase and Nuendo or Apple Logic Pro have Dolby Atmos rendering natively integrated, while others such as Avid Pro Tools can be connected to the Dolby Atmos Production or Mastering Suites. The final mix is exported as a master file such as ADM BWF or IMF IAB and then encoded to Dolby Digital Plus JOC, Dolby AC-4, or Dolby TrueHD for streaming or Blu-ray disc. Dolby Atmos for games is created at runtime (as the player plays the game) using an audio engine running as a component of a game engine, then passing the multi-channel audio streams to an AVR, soundbar, or other endpoint device containing a Dolby Atmos renderer. At the time of writing, most game audio engines cannot play back the files output by the linear Dolby Atmos content creation tools mentioned above.

There is a limit of 32 objects total (including bed channels) that can be transmitted over HDMI to an AVR, soundbar or TV. Linear media can intelligently group Atmos objects that occupy similar spatial positions into clusters, called spatial object groups, in a pre-encoding process. Spatial object groups are a composite set of the original audio objects. Because game audio is dynamic and rendered at runtime, real-time grouping of objects is not currently an option. Dynamic allocation of Atmos objects is not possible either, so the Technical Sound Designer must thoughtfully manage which sounds are assigned to objects. If a sound is assigned to an object but no object is available when it is called, it will be rendered in the 7.1.4 bed channels.
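The arithmetic behind the HDMI limit can be written out as a short sketch. It assumes that each bed channel counts as one of the 32 transmitted objects, which is how the limit is described above; `objects_available` is a hypothetical helper name.

```python
HDMI_TOTAL_LIMIT = 32  # objects over HDMI, bed channels included (from the text)


def objects_available(bed_channels: int,
                      total_limit: int = HDMI_TOTAL_LIMIT) -> int:
    """Return how many dynamic objects remain once the bed is accounted for."""
    if not 0 <= bed_channels <= total_limit:
        raise ValueError("bed does not fit within the total limit")
    return total_limit - bed_channels


bed_7_1_4 = 7 + 1 + 4  # ear-level + LFE + height channels
print(objects_available(bed_7_1_4))  # 20
```

The result agrees with the figure of 20 Atmos objects over HDMI quoted elsewhere in this guide.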

Game objects vs Atmos objects

Dolby Atmos objects are different from game objects. For example, Unity defines a game object as “the Base class for all entities in Unity Scenes”. From an audio perspective, a game object can be thought of as an element that can have an associated sound emitter, such as a player character, creature, weapon or an animating prop in the world such as a fountain. A fountain would consist of a 3D mesh, a texture, a particle effect and a sound component. The sound component would include WAV files and other metadata describing how that sound should be played back in the game, such as amplitude roll-off over distance, occlusion and obstruction, and orientation. Unlike linear media, where the movement of objects is fixed, the 3D position of the object in the world is translated by the game engine into panning information at runtime. That panning information is not stored in the game object because it is continuously variable: controller input from the gamer updates the 3D coordinates of the object in space relative to the camera.
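The runtime translation from world position to panning information can be sketched as a change of coordinates into the listener's frame. The axis convention here (x to the right, y forward, z up, yaw turning the listener counter-clockwise when seen from above) is an assumption chosen for this example; each game engine and middleware defines its own conventions.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def listener_relative(source: Vec3, listener: Vec3, yaw_deg: float) -> Vec3:
    """Return the source position as (right, forward, up) from the listener.

    yaw_deg = 0 means the listener faces along the world +y axis.
    """
    dx, dy, dz = (s - l for s, l in zip(source, listener))
    yaw = math.radians(yaw_deg)
    right = dx * math.cos(yaw) + dy * math.sin(yaw)
    forward = -dx * math.sin(yaw) + dy * math.cos(yaw)
    return right, forward, dz


# A fountain 5 units ahead of and 2 units above a listener facing +y.
print(listener_relative((0.0, 5.0, 2.0), (0.0, 0.0, 0.0), yaw_deg=0.0))
```

Calling the function every frame with the latest listener position and yaw yields the continuously changing coordinates that are then used for panning.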

In the Atmos paradigm, which started out in cinema sound, a Dolby Atmos object consists of an audio stream (digital audio data) that is sent to the Dolby Atmos Renderer plus a metadata stream transporting the 3D coordinates, and size information. That Atmos object could be rendered down into the 7.1.4 bed, which to a game developer is exactly what happens when the sound from an associated game object is mixed into the final 7.1.4 output. The concept of keeping a sound separate from that final mix bus and sending it out as an individual audio stream (not in the 7.1.4 mix bus) is what is new and unique about Dolby Atmos objects from a game audio perspective.

Objects and positional accuracy

Dolby Atmos was originally developed for the cinema, a large box with many speakers around the walls and ceiling, with a large audience. The Dolby Atmos panner allowed objects to be panned to very specific co-ordinates throughout this large space, with more precision than a legacy 7.1 system. In a home theater environment, there could be height speakers and there may or may not be side speakers in addition to the rears, so the Dolby Atmos panner provides a bit more precision, but it is not as critical as in the cinema with many more speakers in the surround array.

It is with Dolby Atmos for Headphones that Atmos objects have significant value and impact for gamers. You can think of the 7.1.4 bed as a virtual 7.1.4 speaker layout. Each "speaker" has its own HRTF to place it at the correct point in space for a 7.1.4 layout. A sound that is panned between the left front and left side speakers would be a phantom image between the two virtual speakers. If the sound is then panned up, signal would also be added to the left front height speaker to make the phantom image be perceived as moving up. However, if the sound placed between the two speakers were a Dolby Atmos object, it would get its own HRTF applied to it instead of being a phantom image. This gives much higher location accuracy, especially if the sound is dynamically panned around the gamer. For example, assigning enemy-related sounds to objects would let players locate enemies more quickly, improving tactical awareness.

Atmos fold downs

We recommend creating and mixing your game for a 7.1.4 speaker configuration. If the endpoint does not support a 7.1.4 configuration, the audio will be intelligently folded down to the end user's channel format. This fold down happens on the end user's AVR or soundbar for home theater setups connected to consoles, or on the gamer's PC. Technically, the 7.1.4 mix is rendered to 5.1 in the Dolby Atmos Renderer, and then downmixed to 2.0.
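For intuition about the final 5.1 to 2.0 step, the sketch below applies a conventional stereo downmix in which the center and surround channels are attenuated by about 3 dB and the LFE is omitted. These coefficients are an assumption based on common broadcast practice; the actual Dolby downmix settings are configurable and may differ, and the earlier 7.1.4 to 5.1 render is not modeled here. The function name and channel labels are hypothetical.

```python
import math
from typing import Dict, Tuple

MINUS_3_DB = 1.0 / math.sqrt(2.0)  # about 0.707 (assumed coefficient)


def downmix_5_1_to_stereo(frame: Dict[str, float]) -> Tuple[float, float]:
    """Fold one 5.1 sample frame (L, R, C, LFE, Ls, Rs) down to (left, right)."""
    left = frame["L"] + MINUS_3_DB * frame["C"] + MINUS_3_DB * frame["Ls"]
    right = frame["R"] + MINUS_3_DB * frame["C"] + MINUS_3_DB * frame["Rs"]
    return left, right  # LFE is left out of the stereo mix in this sketch


# A sound only in the center channel lands equally in both stereo channels.
center_only = {"L": 0.0, "R": 0.0, "C": 1.0, "LFE": 0.0, "Ls": 0.0, "Rs": 0.0}
print(downmix_5_1_to_stereo(center_only))
```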

Additional information:

https://professionalsupport.dolby.com/s/article/How-do-the-5-1-and-Stereo-downmix-settings-work?language=en_US

Supported platforms

Dolby Atmos for games is currently supported on Windows 10/11, Xbox One through Series X|S, PlayStation 5, iOS and Android.

Cost and Attribution

There are no additional licensing costs to support Dolby Atmos in games. On PC and Xbox, rendering to Dolby Atmos is done at the OS level from the audio that the game's audio engine passes to Microsoft Spatial Sound.

For iOS and Android, the Dolby Atmos for Mobile plug-in for Wwise is free to download from https://games.dolby.com/

For PC and Xbox, please ensure that the "Supports Atmos" box is checked on the submission to Microsoft if your game supports Dolby Atmos (and Dolby Vision, if applicable).

Dolby Atmos logos and requirements are available here: https://games.dolby.com/logos-guidelines/logos/

Contact games@dolby.com for further support or to explore co-marketing opportunities.

Studio Setup

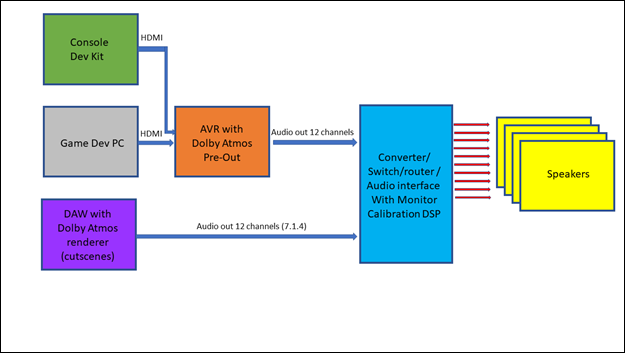

PC audio routing

Dev kit routing

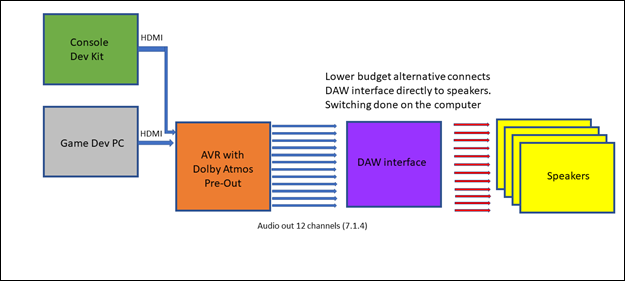

Monitoring without hardware controller

Dolby Atmos for Headphones configuration

Game audio developers should monitor their game in a 7.1.4 equipped studio during development, especially during the final mix. Not every member of the audio or QA team needs to have a full 7.1.4 studio, as Dolby Atmos for Headphones allows spatial monitoring and is how many players will listen to the game.

Monitoring Dolby Atmos in game development

A 7.1.4 monitor controller, running in either hardware or software, is recommended for monitoring. This allows easy adjustments to volume, speaker soloing, and in some cases speaker EQ. The monitor controller should allow monitoring of the sound designer's DAW, and provide at least 12 analog inputs for the 7.1.4 channels from the developer's authoring PC as well as inputs for console development.

A common way to monitor the 7.1.4 audio channels coming from either a PC running audio middleware or a PC game is routing the digital output from the PC out of an HDMI output (found on certain video cards) into a Dolby Atmos compatible AVR (Audio Video Receiver) or Surround Processor with 12 pre-amp outputs (7.1.4). If the sound output on the PC is set to Atmos for home theater, the spatial output (either from the authoring tool or the game) will send an Atmos encoded bitstream to the AVR over HDMI (and DVI to HDMI). This will then be decoded and sent from the AVR preamps to the monitor controller analog inputs.

PC audio routing

Here are the steps to do this in Windows 10 using an HDMI output to a Dolby Atmos AVR (Audio Video Receiver):

- Open the Microsoft Store and install the Dolby Access app

- Follow the onscreen instructions under the “Product” tab in Dolby Access to set up Dolby Atmos for home theater. This enables bitstream output over HDMI.

- Right click on the speaker icon in the taskbar and select Spatial -> Dolby Atmos for Home Theater

- If you don't see Spatial Audio as an option, ensure that you are using the correct audio device and that your AVR supports Dolby Atmos

- Verify that your AVR is receiving the Dolby Atmos bitstream by looking for the Dolby logo or text on the display of your AVR.

Dev kit routing

For console development, the HDMI output of the console development kit can be routed into a Dolby Atmos capable AVR or Processor and then out of the pre-outs and into a 7.1.4 capable monitor controller.

Monitoring without hardware controller

A more cost-effective but potentially less convenient alternative to a hardware monitor controller is to route the AVR pre-amp outputs into the audio inputs of a DAW audio interface and monitor within the DAW. For example, in Pro Tools you can create separate Aux tracks for each external source you want to bring in. Alternatively, create a single Aux and use the input routing to select the appropriate external source as needed. In Nuendo, the Control Room allows easy switching between different monitor inputs.

Dolby Atmos for Headphones configuration

For monitoring on Dolby Atmos for headphones instead of speakers, right click on the speaker icon in the Windows taskbar and select Spatial -> Dolby Atmos for Headphones. Please note that this will work for monitoring the output of your game or middleware output on a PC. Dolby Atmos for Headphones is installed through the Dolby Access app available on the Windows Store.

Additional information:

Integrating Atmos into Middleware

Support for Dolby Atmos is available for PC, Xbox One through Series X|S and mobile by using Audiokinetic's Wwise or Firelight Technologies' FMOD audio middleware, which run in Unreal, Unity, and other game engines.

Microsoft has created Microsoft Spatial Sound, a platform level solution for spatial sound which runs in the OS layer of Windows 10 and above and Xbox Series X|S. This solution takes a Spatial Sound output from middleware and passes it to the Dolby Atmos Renderer. This in turn outputs a Dolby Atmos bitstream to TVs, home theaters, and sound bars that support Dolby Atmos. It also supports spatial sound rendered by Dolby Atmos for Headphones.

On mobile, there are Dolby Atmos plug-ins for both Wwise and FMOD which support iOS and certain Android devices. https://games.dolby.com/atmos/mobile/

Additional information:

Wwise Configuration

Bus Setup

Channel order for multichannel audio

Wwise 2021.1 | PC or Xbox Series X|S

Wwise 2019.2 | PC or Xbox One to Series X|S

Wwise 2018.1 – 2021.1 | Mobile

Bus Setup

For games supporting Atmos, a good start is to set up the following buses at the top level:

- Master bus - The Master Audio Bus will adapt to the initialized Endpoint configuration while taking into account the settings of the System Audio Device. For example, if on a PC the user right clicks on the speaker icon in the task bar and chooses Dolby Atmos for Headphones, they will get 7.1.4. If it is set to stereo, it will be 2.0.

- Main Mix - If Dolby Atmos is activated, the Bus Configuration of the Main Mix used by the System Audio Device will be set to 7.1.4.

- Audio objects - Individual buses for each Atmos audio object, with a maximum of 20 for Dolby Atmos over HDMI and 16 for Dolby Atmos for Headphones. In the Audio Device settings, if 3D Audio is allowed and System Audio Objects are enabled, Wwise will deliver sounds routed as Audio Objects and their metadata (position, volume, etc.) to Dolby Atmos for processing.

- Passthrough – A stereo bus for audio that will not be spatialized, such as music or UI sounds.

Child Audio Buses can be set to explicit channel configurations to meet the needs of dynamic mixing and mix optimization. These additional sub-buses, for categories such as ambiences, dialog and weapons, could sit lower in the hierarchy, inheriting the parent bus's properties and configuration.

Additional information

Channel order for multichannel audio

Before you use a multichannel source file in a Wwise project, make sure the channel order is correct.

The channel order used by Wwise is different from the channel order of Dolby Atmos. Follow the Wwise channel order (L, R, C, LFE, BL, BR, SL, SR, HFL, HFR, HBL, HBR). It is recommended that you use the Multi-Channel Creator from Wwise to create multichannel audio files.
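If a source file arrives in a different order, the channels need to be rearranged before import. The sketch below shows the idea on a list of per-channel items; the target order is the Wwise order given above, while the example source order (side surrounds before back surrounds) is a hypothetical input chosen for illustration. In practice the Multi-Channel Creator handles this for you.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")

WWISE_ORDER = ["L", "R", "C", "LFE", "BL", "BR", "SL", "SR",
               "HFL", "HFR", "HBL", "HBR"]


def reorder_channels(channels: Sequence[T],
                     source_order: Sequence[str],
                     target_order: Sequence[str] = WWISE_ORDER) -> List[T]:
    """Rearrange per-channel items from source_order into target_order."""
    if sorted(source_order) != sorted(target_order):
        raise ValueError("source and target must name the same channels")
    if len(channels) != len(source_order):
        raise ValueError("one item is required per channel")
    by_label = dict(zip(source_order, channels))
    return [by_label[label] for label in target_order]


# Hypothetical source file with side surrounds stored before back surrounds.
source_order = ["L", "R", "C", "LFE", "SL", "SR", "BL", "BR",
                "HFL", "HFR", "HBL", "HBR"]
print(reorder_channels(list(range(12)), source_order))
```

Each item can be anything that represents one channel, such as a mono file path or a buffer of samples.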

Wwise 2021.1 | PC or Xbox Series X|S

Wwise 2021.1 supports spatial sound and objects natively. Wwise then passes these audio streams through the Microsoft Spatial Sound API to the OS layer. Here the Dolby Atmos Renderer converts those streams to the Dolby Atmos bitstream, which is then sent to an endpoint such as a soundbar, TV or headphones.

Audio Devices -> Default Work Unit -> System will auto configure to match the channel configuration of the endpoint. For example, if the gamer has a Dolby Atmos capable home theater or sound bar that supports 7.1.4, it will receive all those channels. If they have a Dolby Digital 5.1 system, that is the bitstream they receive. If their endpoint is a pair of headphones with Dolby Atmos for Headphones enabled, they will get a binaurally encoded stereo stream.

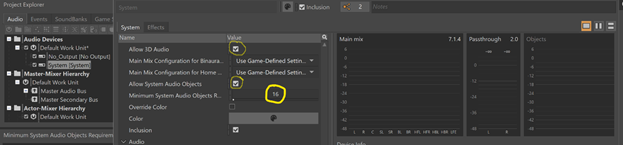

- Double click "System" (under "Audio Devices" ->"Default Work Unit")

- Ensure that the "Allow 3D Audio" checkbox is enabled on the Audio Device.

- Set Minimum System Objects to 16. Note: Failure to do this will cause all objects to be pushed to the beds when using Dolby Atmos, and not be rendered as individual Dolby Atmos objects.

For Xbox and PC, set the number of objects to 16 (or 15 if you anticipate the user may choose to use Windows Sonic instead of Dolby Atmos).

This is the safest setting because Dolby Atmos over HDMI has a maximum of 20 objects, and Dolby Atmos for Headphones a maximum of 16. Windows Sonic has a maximum of 15.
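The reasoning behind the "safest" value is simply the smallest limit among the renderers the player might select. A minimal sketch, using the limits quoted above (the dictionary and function names are hypothetical):

```python
from typing import Iterable

# Per-renderer dynamic object limits as stated in this guide.
RENDERER_OBJECT_LIMITS = {
    "Dolby Atmos over HDMI": 20,
    "Dolby Atmos for Headphones": 16,
    "Windows Sonic": 15,
}


def safe_object_count(renderers: Iterable[str]) -> int:
    """Return the object count that fits every renderer in the list."""
    return min(RENDERER_OBJECT_LIMITS[name] for name in renderers)


dolby_only = ["Dolby Atmos over HDMI", "Dolby Atmos for Headphones"]
print(safe_object_count(dolby_only))                      # 16
print(safe_object_count(dolby_only + ["Windows Sonic"]))  # 15
```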

System Audio Object Allocation

There are a limited number of system audio objects available for a given platform. System Audio Objects are allocated using first-in, first-out prioritization. You need to carefully manage what objects are being used depending if you want them available for playback via the Wwise authoring application or in the game (if it is running on the same PC). If the system runs out of objects, they will be assigned to the bed channels instead of being rendered as individual objects.

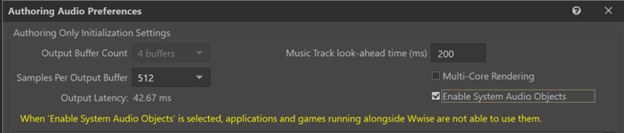

Application Usage of System Audio Objects

If monitoring through Wwise, check the “Enable System Audio Objects” checkbox; uncheck it if monitoring in-game on the same machine. This option can be found under the Audio menu, in Authoring Audio Preferences.

- Objects are assigned at the Windows OS layer. If you have the box checked and are monitoring through the game running on the same machine, Wwise will grab all the objects, leaving none for the game to use.

- If you are connected to and monitoring from an Xbox dev kit, you can leave this checked because you will be listening to the objects assigned on the Xbox dev kit.

Additional resources:

https://www.audiokinetic.com/library/edge/?source=Help&id=audio_preferences

Allows Wwise to reserve System Audio Objects, preventing any game or application running alongside Wwise from acquiring them. If you are trying to audition System Audio Objects from within Wwise, this option should be enabled.

If you are trying to hear System Audio Objects from a game running alongside Wwise on the same PC, this option should be disabled. This prevents Wwise from reserving system objects, allowing your game to acquire them. The result is that any Audio Object that would normally have been sent out from Wwise as a System Audio Object will instead be mixed to the Main Mix.

On Windows, there is a limited number of Microsoft Spatial Sound objects that must be shared across all currently running processes, including games and applications.
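The guidance above reduces to a small decision rule, sketched here as a function. It is only a memory aid for the checkbox setting described in this section, not a Wwise API; the function and parameter names are hypothetical.

```python
def enable_system_audio_objects(monitor_through_wwise: bool,
                                game_on_same_pc: bool) -> bool:
    """Suggest the state of the "Enable System Audio Objects" checkbox.

    monitor_through_wwise: True when auditioning objects in Wwise authoring.
    game_on_same_pc: True when the game being monitored runs on the same PC
    as Wwise and therefore competes for the same pool of objects.
    """
    if monitor_through_wwise:
        return True  # Wwise needs to reserve the objects to audition them
    # When listening to the game, only uncheck if it shares this machine.
    return not game_on_same_pc


print(enable_system_audio_objects(True, False))   # auditioning in Wwise
print(enable_system_audio_objects(False, True))   # game on the same PC
print(enable_system_audio_objects(False, False))  # game on an Xbox dev kit
```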

Set up buses

Main Mix bus setup:

- Under the Audio tab, Master-Mixer Hierarchy -> Default Work Unit -> Master Audio Bus, right click and select "New Child -> Audio Bus". Name the new bus appropriately, "Main Beds" for example, or whatever makes sense to you.

- Under the "General Settings" tab in the Audio Bus Property Editor, set the Bus Configuration to "Same as Main Mix", which sets the bus output configuration to whatever the System device has configured itself to, based on the endpoint.

- Route sounds from the Actor-Mixer hierarchy intended for the Main Mix to this bus. These will go to the bed channels.

Object Audio setup:

- Under the Audio tab, Master-Mixer Hierarchy -> Default Work Unit -> Master Audio Bus, right click and select "New Child -> Audio Bus". Name the new bus appropriately, "Objects" for example, or whatever makes sense to you.

- Under the "General Settings" tab in the Audio Bus Property Editor, set the Bus Configuration to "Audio Objects".

- Route sounds from the Actor-Mixer hierarchy that should be individual Atmos Objects (as opposed to being in the main bed) to the “Objects” Bus.

- Keep in mind that these are limited to 20 objects for Dolby Atmos over HDMI, and 16 for Dolby Atmos for Headphones & built-in speakers.

Passthrough setup

- Step 1: Create an Audio Bus as a child of the Master Audio Bus called "Passthrough"

- Step 2: Set the Bus Configuration to "Same as Passthrough Mix".

- Step 3: Route audio which should not be spatialized (spatialization applies filters) to the “Passthrough” Bus. Music is an example of something you would route through this bus.

Submixes for various categories of sounds can be set up to inherit the configuration of the parent bus if the bus configuration is set to "Same as Main Mix" in the "General Settings" tab.

Note:

- System Audio Objects are allocated using first-in, first-out prioritization. You need to carefully manage what objects are being used. If the system runs out of objects, they will be assigned to the bed channels.

- For Xbox and PC, set the number of objects to 16 (or 15 if you anticipate the user may choose to use Windows Sonic instead of Dolby Atmos).

- This is the safest setting because Dolby Atmos over HDMI has a maximum of 20 objects, and Dolby Atmos for Headphones a maximum of 16. Windows Sonic has a maximum of 15.

Additional information:

- Audiokinetic Blog | https://docs.microsoft.com/en-us/windows/win32/coreaudio/spatial-sound

Wwise 2019.2 | PC or Xbox One to Series X|S

Wwise 2019.2 does not have native support for Microsoft Spatial Sound (unlike 2021.1). You therefore need to install the Microsoft Spatial Sound Platform plug-in for Wwise 2019.2 from the Wwise Launcher, found under the Mixer Plug-ins tab. This plug-in handles routing the audio out to the Windows Core OS, where the Dolby Atmos renderer converts the spatial audio to a Dolby Atmos bitstream for HDMI or spatializes it for Dolby Atmos for Headphones.

Set up buses

Wwise 2019 requires two Master buses: one using the Microsoft_Spatial_Sound_Output device for spatial output and one set to System device for "passthru". You do not need to specifically set the channel configuration for each master bus as the device plugins will take care of that automatically.

Select Master-Mixer Hierarchy -> Default Work Unit -> Master Audio Bus.

In the Property Editor, under "Audio Device" select "Microsoft Spatial Sound Platform Output". If it is not available, make sure you have downloaded the plug-in.

Create a second Master bus for the passthrough channels:

Main Mix bus setup:

- Under the Audio tab, Master-Mixer Hierarchy -> Default Work Unit -> Master Audio Bus, right click and select "New Child -> Audio Bus". Name the new bus appropriately, "Main Beds" for example, or whatever makes sense to you. You could create multiple 7.1.4 sub-mixes all feeding the Master Bus. Sub-buses can be set up that inherit the Master Mix Bus configuration.

- Under General Settings -> Channel Configuration select "Parent"

- Route sounds from the Actor-Mixer hierarchy intended for the Main Mix to this bus. These will go to the bed channels.

Passthrough setup

- Right click on Master-Mixer Hierarchy -> Default Work Unit and select New Child -> Audio Bus

- Rename this bus to “Passthru” and ensure the Audio Device is set to “System”

- This sets up the Master Audio Bus output to a 7.1.4 channel configuration

- Route audio which you do not want spatialized (spatialization applies HRTF filters) to the “Passthru” bus. Music is an example of something you would route through this bus.

Route your audio to the appropriate bus.

Submixes for various categories of sounds which need to be sent to the bed channels can be set up to inherit the configuration of the parent bus if the bus configuration is set to "Same as Main Mix" in the "General Settings" Tab

Note:

Dolby Atmos Objects are not supported in Wwise 2019

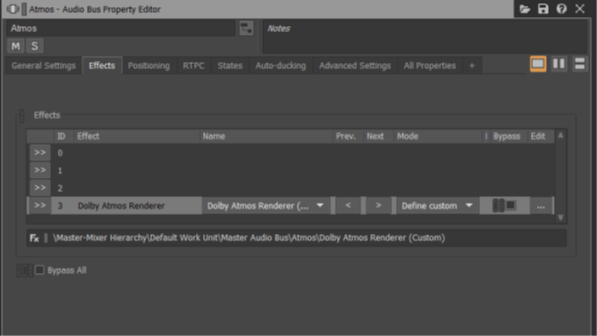

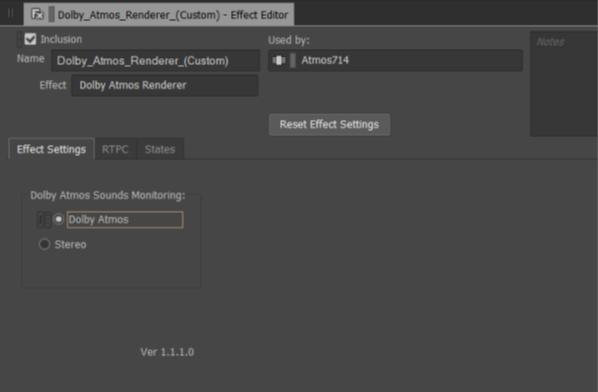

Wwise 2018.1 – 2021.1 | Mobile

Access the Dolby Atmos plug-in for Wwise from here: https://games.dolby.com/atmos/mobile/sign-up/

The Dolby Atmos for mobile gaming plug-ins consist of an Atmos and a Stereo plug-in. The Dolby Atmos Renderer plug-in is used to monitor the 7.1.4 bus (bed channels) in binaural while authoring. In the game, it passes the 7.1.4 bus to the end device for rendering. The Dolby Stereo plug-in performs no audio processing while authoring. In the game, it passes the audio channels that do not need Dolby Audio Processing to the end device while matching the latency of the 7.1.4 bus.

Place the Dolby Atmos for Mobile files (.dll and .xml) in the appropriate folder:

<Wwise installation directory>/Authoring/x64/Release/bin/plugins

The Wwise sound engine checks the plugin compatibility with Wwise versions during initialization. Make sure the Dolby Atmos for mobile gaming plug-ins are compatible with the version of Wwise you use. For the 2019 version of Wwise, please use the plug-ins in the 2018 folder.

When you open your Wwise project, make sure you have enabled Android and iOS for the platforms.

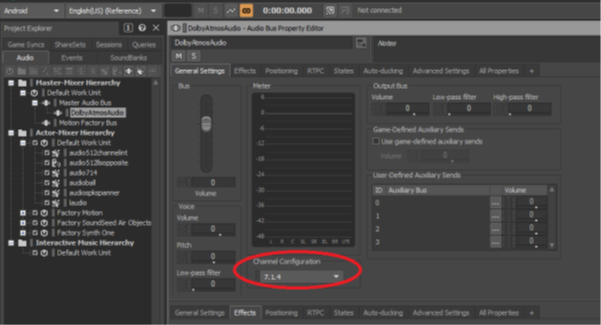

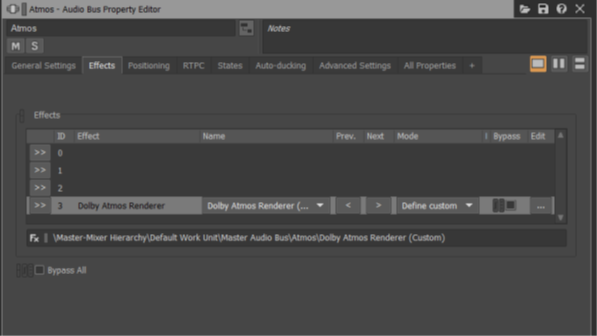

Main Mix bus setup:

- Under the Audio tab, Master-Mixer Hierarchy -> Default Work Unit -> Master Audio Bus, right click and select "New Child -> Audio Bus". Name the new bus appropriately, "Main Beds" or “Main Mix” or “DolbyAtmosBed” for example, or whatever makes sense to you.

- Under the General Settings tab, set the Channel Configuration to 7.1.4.

- On the Effect tab for the audio bus, add the Dolby Atmos plug-in as an effect.

- For the bed channels, open the Plug-in editor and under the “Effect Settings” tab set Dolby Atmos Sounds Monitoring to “Dolby Atmos”

- You could create multiple 7.1.4 sub-mixes all feeding the Main Beds Bus. Sub-buses can be set up that inherit the Main Beds bus configuration.

- Route sounds from the Actor-Mixer hierarchy intended for the Main Beds to this bus. These will go to the bed channels.

Passthrough setup

- Under the Audio tab, Master-Mixer Hierarchy -> Default Work Unit -> Master Audio Bus, right click and select "New Child -> Audio Bus". Name the new bus appropriately, "Passthrough" for example, or whatever makes sense to you.

- Under the General Settings tab, set the Channel Configuration to 2.0.

- On the Effect tab for the audio bus, add the Dolby Atmos plug-in as an effect.

- Route audio which should not be spatialized (which applies HRTF filters) to the “Passthrough” Bus. Music is an example of something you might route through this bus if you don’t want it spatialized.

- You could create multiple stereo sub-mixes all feeding the Passthrough Bus. Sub-buses can be set up that inherit the Passthrough Bus configuration. For example, you might have a UI and a Music bus.

Note:

- Dolby Atmos Objects are not supported in Wwise 2019 Mobile at the time of writing.

- Avoid putting a plug-in after the DolbyAtmosRenderer/DolbyStereoRenderer plug-in in the effects section. Doing so will result in Atmos processing being ignored.

Additional information

- Information and detail are in this document, which is zipped up with the Dolby Atmos for mobile plug-ins: integguideWwisedolbyatmospluginsmobilegame.pdf

- https://www.audiokinetic.com/library/edge/?source=Help&id=building_structure_of_output_buses

FMOD Configuration

FMOD Studio 2.0 | PC or Xbox Series X|S

Bed channels panning

Dolby Atmos Objects panning

FMOD Studio 2.0 | PC or Xbox Series X|S

Supporting Atmos in FMOD starts with setting up the project to support multi-channel output through the Windows Sonic Driver, which is Microsoft’s platform-level solution for spatial sound support on Xbox, Windows and HoloLens 2. This driver will route multi-channel audio to the OS layer on PC or Xbox, where the Dolby Atmos renderer will convert it into a Dolby Atmos bitstream to send over HDMI, or to be spatialized for headphones using Dolby Atmos for Headphones. Right click on the speaker icon in the Windows task bar to select Dolby Atmos for Home Theater for an Atmos bitstream that is output over HDMI, or Dolby Atmos for Headphones for virtual surround sound on any set of headphones. (Install this functionality with Dolby Access)

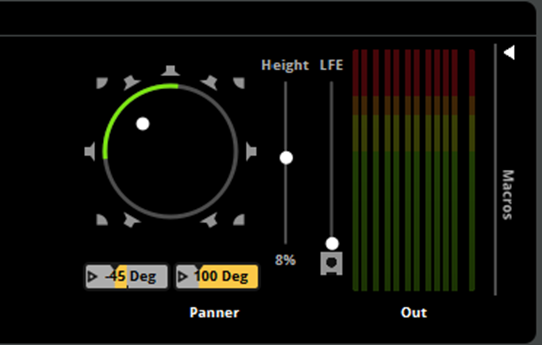

Bed channels panning

In project preferences (Edit -> Preferences), confirm that the audio driver is set to Windows Sonic. Then select the 7.1.4 Output configuration in the master bus, as well as any other events or buses that will be panned in the z axis (such as Dolby Atmos objects). Do this by right clicking on the Output bus meters on the right of the Master track. The meters in the Master Track should show 12 channels (7 planar surround, 4 height, 1 LFE). In FMOD Studio the height parameter (Z axis) is on a separate “Height” slider, next to the X/Y panner. A value of 1 will push the sound all the way into the height speakers, 0 will leave it at the planar level. A value of -1 brings the height channels down to the planar level.

Additional information:

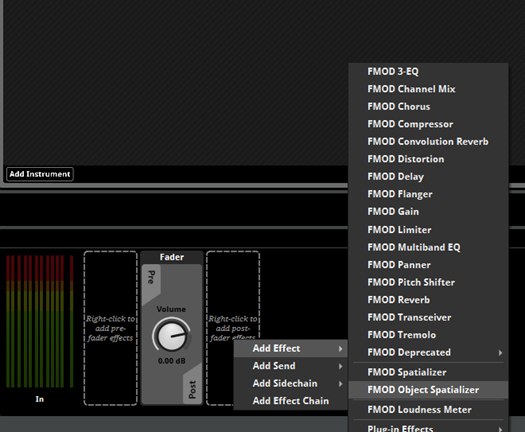

Dolby Atmos Objects panning

Dolby Atmos Objects are routed out on separate buses from the main bed channels. On the PC and Xbox One, 20 Atmos objects are available for playback using HDMI, and 16 for Dolby Atmos for Headphones, so it is safest to manage your voice count with a maximum of 16 objects. If no more objects are available when needed at runtime, the object will fall back to being rendered in the bed channels.

Routing to the Dolby Atmos Object buses is done by instantiating an FMOD Object Spatializer on the Master track as a post fader effect. Adding the Object spatializer will bypass the regular panner and master bus and route directly on its own bus to the Dolby renderer in the OS. Note this will bypass any mastering plug-ins on the master bus.

Additional information

Atmos Plug-in for Unreal Engine

As an extension to the Unreal Engine, the Dolby Atmos plug-in for Unreal Engine (Beta) embeds within the controls of the Unreal Editor user interface. The plug-in provides access to enhanced Dolby spatial audio processing for game development and design on Windows 10, Xbox Series X|S and Android platforms.

There are a few steps to access the plug-in:

- Create an account on games.dolby.com

- Review and Sign the EULA for using the plug-in

- Download the plug-in
